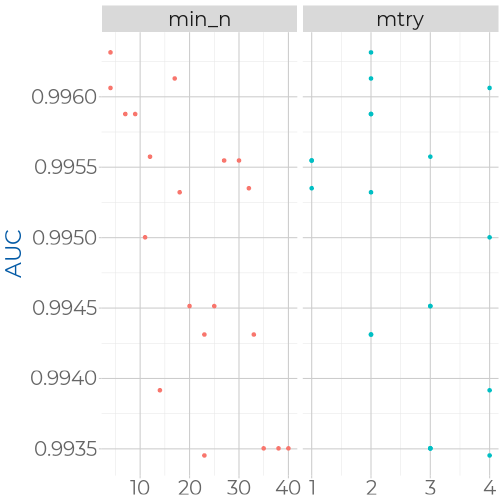

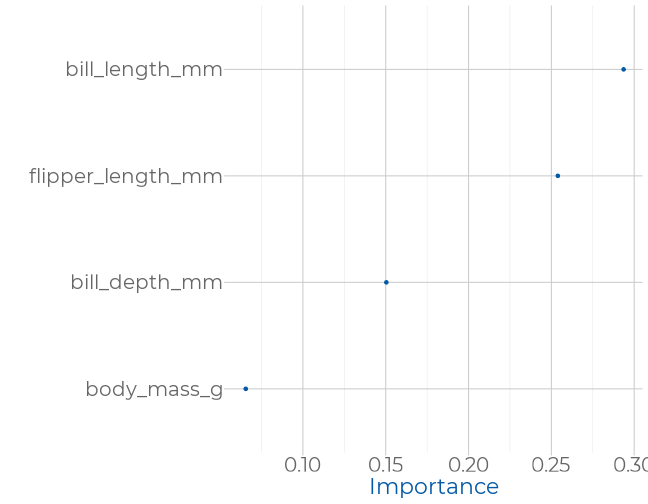

background-image: url(img/latinR-portada.png) background-size: cover class: animated slideInRight fadeOutLeft, middle <style type="text/css"> .xaringan-extra-logo { width: 110px; height: 128px; z-index: 0; background-image: url('img/logo-tidymodels.png'); background-size: contain; background-repeat: no-repeat; position: absolute; top:1em;right:1em; } </style> # 02. Clasificación. Tuneo de Modelos. --- background-image: url(img/latinR-portada.png) background-size: cover class: animated slideInRight fadeOutLeft, middle # Árboles de decisión --- # Árboles de decisión <img src="img/bret-tree.png" width="100%" style="display: block; margin: auto;" /> .footnote[Imagen tomada de https://www.packtpub.com/big-data-and-business-intelligence/machine-learning-r-second-edition] --- ## Hiperparámetro .bg-near-white.b--purple.ba.bw2.br3.shadow-5.ph4.mt5[ ### Un hiperparámetro es una propiedad de un algoritmo de aprendizaje. Este valor influencia la forma en que trabaja el algoritmo. Estos valores no son aprendidos por el algoritmo desde los datos. Deben ser seteados antes de correr el algoritmo por el analista. ] .footnote[The Hundred-page machine learning book. Andriy Burkov] --- ## Hiperparámetros de ## árboles de decisión * **min_n** Establezca n mínimo para dividir en cualquier nodo. Es un método de parada temprana. Se utiliza para evitar el sobreajuste. * **tree_depth** Pone un límite a la profundidad máxima del árbol. Un método para detener el algoritmo. Se utiliza para evitar el sobreajuste. * **cost_complexity** Agrega un costo o penalización a los errores de árboles más complejos. Es una forma de poda. Utilizado para evitar el sobreajuste (overfitting). --- ## Vamos a modelar las especies 🐧 ### Ingreso los datos ```r library(tidymodels) library(palmerpenguins) penguins <- palmerpenguins::penguins %>% drop_na() %>% #elimino valores perdidos select(-year,-sex, -island) #elimino columnas q no son numéricas glimpse(penguins) #observo variables restantes ``` ``` ## Rows: 333 ## Columns: 5 ## $ species <fct> Adelie, Adelie, Adelie, Adelie, Adelie, Adelie, Ade… ## $ bill_length_mm <dbl> 39.1, 39.5, 40.3, 36.7, 39.3, 38.9, 39.2, 41.1, 38.… ## $ bill_depth_mm <dbl> 18.7, 17.4, 18.0, 19.3, 20.6, 17.8, 19.6, 17.6, 21.… ## $ flipper_length_mm <int> 181, 186, 195, 193, 190, 181, 195, 182, 191, 198, 1… ## $ body_mass_g <int> 3750, 3800, 3250, 3450, 3650, 3625, 4675, 3200, 380… ``` --- ### Paso 1: Vamos a dividir el set de datos ```r library(rsample) set.seed(123) #setear la semilla p_split <- penguins %>% initial_split(prop=0.75) # divido en 75% p_train <- training(p_split) p_split ``` ``` ## <Analysis/Assess/Total> ## <250/83/333> ``` ```r # para hacer validación cruzada estratificada p_folds <- vfold_cv(p_train, strata = species) ``` Estos son los datos de entrenamiento/prueba/total * __Vamos a _entrenar_ con 250 muestras__ * __Vamos a _testear_ con 83 muestras__ * __Datos totales: 333__ --- ### Paso 2: Preprocesamiento (receta) ```r #creo la receta recipe_dt <- p_train %>% recipe(species~.) %>% step_corr(all_predictors()) %>% #elimino las correlaciones step_center(all_predictors(), -all_outcomes()) %>% #centrado step_scale(all_predictors(), -all_outcomes()) %>% #escalado prep() recipe_dt #ver la receta ``` ``` ## Data Recipe ## ## Inputs: ## ## role #variables ## outcome 1 ## predictor 4 ## ## Training data contained 250 data points and no missing data. ## ## Operations: ## ## Correlation filter removed no terms [trained] ## Centering for bill_length_mm, ... [trained] ## Scaling for bill_length_mm, ... [trained] ``` --- background-image: url(img/dt-fondo.png) background-size: cover ### Paso 3: Especificar el modelo 🌳 __Modelo de árboles de decisión__ __Vamos a utilizar el modelo por defecto__ ```r #especifico el modelo set.seed(123) vanilla_tree_spec <- decision_tree() %>% #arboles de decisión set_engine("rpart") %>% #librería rpart set_mode("classification") #modo para clasificar vanilla_tree_spec ``` ``` ## Decision Tree Model Specification (classification) ## ## Computational engine: rpart ``` --- background-image: url(img/dt-fondo.png) background-size: cover ### Paso 4: armo el workflow ```r #armo el workflow tree_wf <- workflow() %>% add_recipe(recipe_dt) %>% #agrego la receta add_model(vanilla_tree_spec) #agrego el modelo tree_wf ``` ``` ## ══ Workflow ══════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════ ## Preprocessor: Recipe ## Model: decision_tree() ## ## ── Preprocessor ────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────── ## 3 Recipe Steps ## ## ● step_corr() ## ● step_center() ## ● step_scale() ## ## ── Model ───────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────── ## Decision Tree Model Specification (classification) ## ## Computational engine: rpart ``` --- background-image: url(img/dt-fondo.png) background-size: cover ### Paso 5: Ajuste de la función ```r #modelo vanilla sin tunning set.seed(123) vanilla_tree_spec %>% fit_resamples(species ~ ., resamples = p_folds) %>% collect_metrics() #desanidar las metricas ``` ``` ## # A tibble: 2 x 5 ## .metric .estimator mean n std_err ## <chr> <chr> <dbl> <int> <dbl> ## 1 accuracy multiclass 0.951 10 0.0123 ## 2 roc_auc hand_till 0.970 10 0.00794 ``` --- background-image: url(img/dt-fondo.png) background-size: cover #### Paso 5.1: vamos a especificar 2 hiperparámatros ```r set.seed(123) trees_spec <- decision_tree() %>% set_engine("rpart") %>% set_mode("classification") %>% set_args(min_n = 20, cost_complexity = 0.1) #especifico hiperparámetros trees_spec %>% fit_resamples(species ~ ., resamples = p_folds) %>% collect_metrics() ``` ``` ## # A tibble: 2 x 5 ## .metric .estimator mean n std_err ## <chr> <chr> <dbl> <int> <dbl> ## 1 accuracy multiclass 0.951 10 0.0123 ## 2 roc_auc hand_till 0.970 10 0.00794 ``` --- background-image: url(img/dt-fondo.png) background-size: cover # Ejercicio .bg-near-white.b--dark-blue.ba.bw2.br3.shadow-5.ph4.mt5[ #### 1. ¿Por qué es el mismo valor obtenido en los dos casos? #### 2. Dejando fijo el valor de min_n=20, pruebe C=1, C=0.5 y C=0. #### 3. Dejando fijo el valor de C=0, pruebe min_n 1 y 5. ] <div class="countdown" id="timer_5f7b6f1b" style="right:0;bottom:0;" data-warnwhen="0"> <code class="countdown-time"><span class="countdown-digits minutes">05</span><span class="countdown-digits colon">:</span><span class="countdown-digits seconds">00</span></code> </div> --- background-image: url(img/dt-fondo.png) background-size: cover ### Paso 6: Predicción del modelo 🔮 ```r #utilizamos la funcion last_fit junto al workflow y al split de datos final_fit_dt <- last_fit(tree_wf, split = p_split ) final_fit_dt %>% collect_metrics() ``` ``` ## # A tibble: 2 x 3 ## .metric .estimator .estimate ## <chr> <chr> <dbl> ## 1 accuracy multiclass 0.880 ## 2 roc_auc hand_till 0.924 ``` --- background-image: url(img/dt-fondo.png) background-size: cover ### Paso 6.1: matriz de confusión ```r final_fit_dt %>% collect_predictions() %>% conf_mat(species, .pred_class) #para ver la matriz de confusión ``` ``` ## Truth ## Prediction Adelie Chinstrap Gentoo ## Adelie 35 0 0 ## Chinstrap 5 9 2 ## Gentoo 1 2 29 ``` -- ```r final_fit_dt %>% collect_predictions() %>% sens(species, .pred_class) #sensibilidad global del modelo ``` ``` ## # A tibble: 1 x 3 ## .metric .estimator .estimate ## <chr> <chr> <dbl> ## 1 sens macro 0.869 ``` --- background-image: url(img/dt-fondo.png) background-size: cover # Ejercicio .bg-near-white.b--dark-blue.ba.bw2.br3.shadow-5.ph4.mt5[ #### Repetir estos pasos para el modelo de C=0 y min_n=5 ] <div class="countdown" id="timer_5f7b724e" style="right:0;bottom:0;" data-warnwhen="0"> <code class="countdown-time"><span class="countdown-digits minutes">05</span><span class="countdown-digits colon">:</span><span class="countdown-digits seconds">00</span></code> </div> --- background-image: url(img/dt-fondo.png) background-size: cover ## Resumiendo .left-column[ ### Paso 1: __Dividimos los datos__ * initial_split() ] .right-column[ <img src="img/rsample.png" width="40%" style="display: block; margin: auto;" /> ] --- background-image: url(img/dt-fondo.png) background-size: cover ## Resumiendo .left-column[ ### Paso 2: __Preprocesamiento de los datos__ * step_*() ] .right-column[ <img src="img/recipes.png" width="40%" style="display: block; margin: auto;" /> ] --- background-image: url(img/dt-fondo.png) background-size: cover ## Resumiendo .left-column[ ### Paso 3: __Especificamos el modelo y sus args__ * set_engine() * mode() ] .right-column[ <img src="img/parsnip.png" width="40%" style="display: block; margin: auto;" /> ] --- background-image: url(img/dt-fondo.png) background-size: cover ## Resumiendo .left-column[ ### Paso 4: __Armamos el workflow con la receta y el modelo__ * workflow() * add_recipe() * add_model() ] .right-column[ <img src="img/workflow.png" width="40%" style="display: block; margin: auto;" /> ] --- background-image: url(img/dt-fondo.png) background-size: cover ## Resumiendo .left-column[ ### Paso 5: Tune __Tuneo de los hiperparámetros__ * last_fit() ] .right-column[ <img src="img/tune.png" width="40%" style="display: block; margin: auto;" /> ] --- background-image: url(img/dt-fondo.png) background-size: cover ## Resumiendo .left-column[ ### Paso 6: __Predicción y comparación de las métricas__ * collect_metrics() * collect_predictions() + conf_mat() ] .right-column[ <img src="img/yardstick.png" width="40%" style="display: block; margin: auto;" /> ] --- background-image: url(img/latinR-portada.png) background-size: cover class: animated slideInRight fadeOutLeft, middle # Random Forest --- # Random Forest <img src="img/random-forest1.png" width="90%" style="display: block; margin: auto;" /> .footnote[Imagen tomada de https://conf20-intro-ml.netlify.com/] --- # Bootstraping <img src="img/random-forest2.png" width="100%" style="display: block; margin: auto;" /> .footnote[Imagen tomada de https://conf20-intro-ml.netlify.com/] --- # Bagging <img src="img/random-forest3.png" width="100%" style="display: block; margin: auto;" /> .footnote[Imagen tomada de] --- ### Veamos que sucede al clasificar el conjunto de testeo <img src="img/random-forest4.png" width="100%" style="display: block; margin: auto;" /> .footnote[Imagen tomada de https://conf20-intro-ml.netlify.com/] --- ### Voto mayoritario (majority vote) <img src="img/random-forest5.png" width="100%" style="display: block; margin: auto;" /> .footnote[Imagen tomada de https://conf20-intro-ml.netlify.com/] --- ### Hiperparámetros de ### random forest ### mtry * El número de predictores a muestrearse en cada split de árbol -- ### min_n * el número de observaciones necesarias para seguir dividiendo nodos --- background-image: url(img/rf-fondo.png) background-size: cover ### Paso 2: Preprocesamos los datos ```r p_recipe <- training(p_split) %>% recipe(species~.) %>% step_corr(all_predictors()) %>% step_center(all_predictors(), -all_outcomes()) %>% step_scale(all_predictors(), -all_outcomes()) %>% prep() p_recipe ``` ``` ## Data Recipe ## ## Inputs: ## ## role #variables ## outcome 1 ## predictor 4 ## ## Training data contained 250 data points and no missing data. ## ## Operations: ## ## Correlation filter removed no terms [trained] ## Centering for bill_length_mm, ... [trained] ## Scaling for bill_length_mm, ... [trained] ``` --- background-image: url(img/rf-fondo.png) background-size: cover ### Paso 3: Especificar el modelo ```r rf_spec <- rand_forest() %>% set_engine("ranger") %>% set_mode("classification") ``` --- ### Veamos como funciona el modelo sin tunning ```r set.seed(123) rf_spec %>% fit_resamples(species ~ ., resamples = p_folds) %>% collect_metrics() ``` ``` ## # A tibble: 2 x 5 ## .metric .estimator mean n std_err ## <chr> <chr> <dbl> <int> <dbl> ## 1 accuracy multiclass 0.972 10 0.0108 ## 2 roc_auc hand_till 0.996 10 0.00192 ``` --- background-image: url(img/rf-fondo.png) background-size: cover ## Random Forest con mtry=2 🔧 ```r rf2_spec <- rf_spec %>% set_args(mtry = 2) set.seed(123) rf2_spec %>% fit_resamples(species ~ ., resamples = p_folds) %>% collect_metrics() ``` ``` ## # A tibble: 2 x 5 ## .metric .estimator mean n std_err ## <chr> <chr> <dbl> <int> <dbl> ## 1 accuracy multiclass 0.972 10 0.0108 ## 2 roc_auc hand_till 0.996 10 0.00192 ``` --- background-image: url(img/rf-fondo.png) background-size: cover ## Random Forest con mtry=3 🔧 ```r rf3_spec <- rf_spec %>% set_args(mtry = 3) set.seed(123) rf3_spec %>% fit_resamples(species ~ ., resamples = p_folds) %>% collect_metrics() ``` ``` ## # A tibble: 2 x 5 ## .metric .estimator mean n std_err ## <chr> <chr> <dbl> <int> <dbl> ## 1 accuracy multiclass 0.967 10 0.0104 ## 2 roc_auc hand_till 0.997 10 0.00115 ``` --- background-image: url(img/rf-fondo.png) background-size: cover ## Random Forest con mtry=4 🔧 ```r rf4_spec <- rf_spec %>% set_args(mtry = 4) set.seed(123) rf4_spec %>% fit_resamples(species ~ ., resamples = p_folds) %>% collect_metrics() ``` ``` ## # A tibble: 2 x 5 ## .metric .estimator mean n std_err ## <chr> <chr> <dbl> <int> <dbl> ## 1 accuracy multiclass 0.964 10 0.00972 ## 2 roc_auc hand_till 0.996 10 0.00147 ``` --- background-image: url(img/fondo-conceptos.png) background-size: cover class: animated slideInRight fadeOutLeft, middle ### En la realidad lo que uno realiza es un tuneo de los hiperparámetros del modelo de manera automática --- background-image: url(img/rf-fondo.png) background-size: cover ### Tuneo de hiperparámetros automático 🔧 ```r tune_spec <- rand_forest( mtry = tune(), trees = 1000, min_n = tune() ) %>% set_mode("classification") %>% set_engine("ranger") tune_spec ``` ``` ## Random Forest Model Specification (classification) ## ## Main Arguments: ## mtry = tune() ## trees = 1000 ## min_n = tune() ## ## Computational engine: ranger ``` --- background-image: url(img/rf-fondo.png) background-size: cover ## Workflows ```r tune_wf <- workflow() %>% add_recipe(p_recipe) %>% add_model(tune_spec) set.seed(123) cv_folds <- vfold_cv(p_train, strata = species) tune_wf ``` ``` ## ══ Workflow ══════════════════════════════════════════════════════════════════════════════════════════════════ ## Preprocessor: Recipe ## Model: rand_forest() ## ## ── Preprocessor ────────────────────────────────────────────────────────────────────────────────────────────── ## 3 Recipe Steps ## ## ● step_corr() ## ● step_center() ## ● step_scale() ## ## ── Model ───────────────────────────────────────────────────────────────────────────────────────────────────── ## Random Forest Model Specification (classification) ## ## Main Arguments: ## mtry = tune() ## trees = 1000 ## min_n = tune() ## ## Computational engine: ranger ``` --- background-image: url(img/rf-fondo.png) background-size: cover ### Paralelizamos los cálculos ```r doParallel::registerDoParallel() set.seed(123) tune_res <- tune_grid( tune_wf, resamples = cv_folds, grid = 20 ) tune_res ``` ``` ## # Tuning results ## # 10-fold cross-validation using stratification ## # A tibble: 10 x 4 ## splits id .metrics .notes ## <list> <chr> <list> <list> ## 1 <split [224/26]> Fold01 <tibble [40 × 6]> <tibble [0 × 1]> ## 2 <split [224/26]> Fold02 <tibble [40 × 6]> <tibble [0 × 1]> ## 3 <split [224/26]> Fold03 <tibble [40 × 6]> <tibble [0 × 1]> ## 4 <split [224/26]> Fold04 <tibble [40 × 6]> <tibble [0 × 1]> ## 5 <split [224/26]> Fold05 <tibble [40 × 6]> <tibble [0 × 1]> ## 6 <split [225/25]> Fold06 <tibble [40 × 6]> <tibble [0 × 1]> ## 7 <split [225/25]> Fold07 <tibble [40 × 6]> <tibble [0 × 1]> ## 8 <split [226/24]> Fold08 <tibble [40 × 6]> <tibble [0 × 1]> ## 9 <split [227/23]> Fold09 <tibble [40 × 6]> <tibble [0 × 1]> ## 10 <split [227/23]> Fold10 <tibble [40 × 6]> <tibble [0 × 1]> ``` --- background-image: url(img/rf-fondo.png) background-size: cover ## Rango de valores para min_n y mtry <!-- --> --- background-image: url(img/rf-fondo.png) background-size: cover ## Elijo el mejor modelo 🏆 * Con la función select_best ```r best_auc <- select_best(tune_res, "roc_auc") final_rf <- finalize_model( tune_spec, best_auc ) final_rf ``` ``` ## Random Forest Model Specification (classification) ## ## Main Arguments: ## mtry = 2 ## trees = 1000 ## min_n = 4 ## ## Computational engine: ranger ``` --- background-image: url(img/rf-fondo.png) background-size: cover ## Valores finales ```r set.seed(123) final_wf <- workflow() %>% add_recipe(p_recipe) %>% add_model(final_rf) final_res <- final_wf %>% last_fit(p_split) final_res %>% collect_metrics() ``` ``` ## # A tibble: 2 x 3 ## .metric .estimator .estimate ## <chr> <chr> <dbl> ## 1 accuracy multiclass 0.988 ## 2 roc_auc hand_till 1 ``` --- background-image: url(img/rf-fondo.png) background-size: cover ## Matriz de Confusión ```r final_res %>% collect_predictions() %>% conf_mat(species, .pred_class) ``` ``` ## Truth ## Prediction Adelie Chinstrap Gentoo ## Adelie 41 0 0 ## Chinstrap 0 11 1 ## Gentoo 0 0 30 ``` --- background-image: url(img/rf-fondo.png) background-size: cover ## Ejercicio 3 .bg-near-white.b--dark-blue.ba.bw2.br3.shadow-5.ph4.mt5[ #### Con el set de datos de iris realice una clasificación con random forest. ] <div class="countdown" id="timer_5f7b6fbe" style="right:0;bottom:0;" data-warnwhen="0"> <code class="countdown-time"><span class="countdown-digits minutes">10</span><span class="countdown-digits colon">:</span><span class="countdown-digits seconds">00</span></code> </div> --- ## Resumiendo .left-column[ ### Paso 2: Recipes __Preprocesamiento de los datos__ ] .right-column[ <img src="img/recipes.png" width="40%" style="display: block; margin: auto;" /> ] --- ## Resumiendo .left-column[ ### Paso 3: Parsnip __Especificamos el modelo y sus args__ ] .right-column[ <img src="img/parsnip.png" width="40%" style="display: block; margin: auto;" /> ] --- ## Resumiendo .left-column[ ### Paso 4: Workflow __Armamos el workflow con la receta y el modelo__ ] .right-column[ <img src="img/workflow.png" width="40%" style="display: block; margin: auto;" /> ] --- ## Resumiendo .left-column[ ### Paso 5: Tune __Tuneo de los hiperparámetros__ ] .right-column[ <img src="img/tune.png" width="40%" style="display: block; margin: auto;" /> ] --- ## Resumiendo .left-column[ ### Paso 6: __Predicción y comparación de las métricas__ ] .right-column[ <img src="img/yardstick.png" width="40%" style="display: block; margin: auto;" /> ] --- background-image: url(img/rf-fondo.png) background-size: cover ### Importancia de las variables * libreria vip ```r library(vip) set.seed(123) final_rf %>% set_engine("ranger", importance = "permutation") %>% fit(species ~ ., data = juice(p_recipe)) %>% vip(geom = "point")+ theme_xaringan() ``` --- background-image: url(img/rf-fondo.png) background-size: cover ### Gráfico <!-- --> --- ### Ejemplos (un poco) más reales #### [Tuning random forest hyperparameters with #TidyTuesday trees data](https://juliasilge.com/blog/sf-trees-random-tuning/) <br><br><br><br> #### [Hyperparameter tuning and #TidyTuesday food consumption](https://juliasilge.com/blog/food-hyperparameter-tune/) --- background-image: url(img/biblio.png) background-size: cover # Bibliografía #### Max Kuhn & Julia Silge - [Tidy Modeling (en desarrollo)](https://www.tmwr.org/) #### Julia Silge's [Personal Blog](https://juliasilge.com/blog/) #### Max Kuhn & Kjell Johnson - [Feature engineering and Selection: A Practical Approach for Predictive Models](http://www.feat.engineering/) #### Max Kuhn & Kjell Johnson - Applied Predictive Modeling #### Documentación de [`tidymodels`](https://www.tidymodels.org/) #### Alison Hill [rstudio conf](https://conf20-intro-ml.netlify.app/materials/) y [curso virtual](https://alison.rbind.io/post/2020-06-02-tidymodels-virtually/) material --- background-image: url(img/final-fondo.png) background-size: cover class: middle # Muchas gracias 🤖